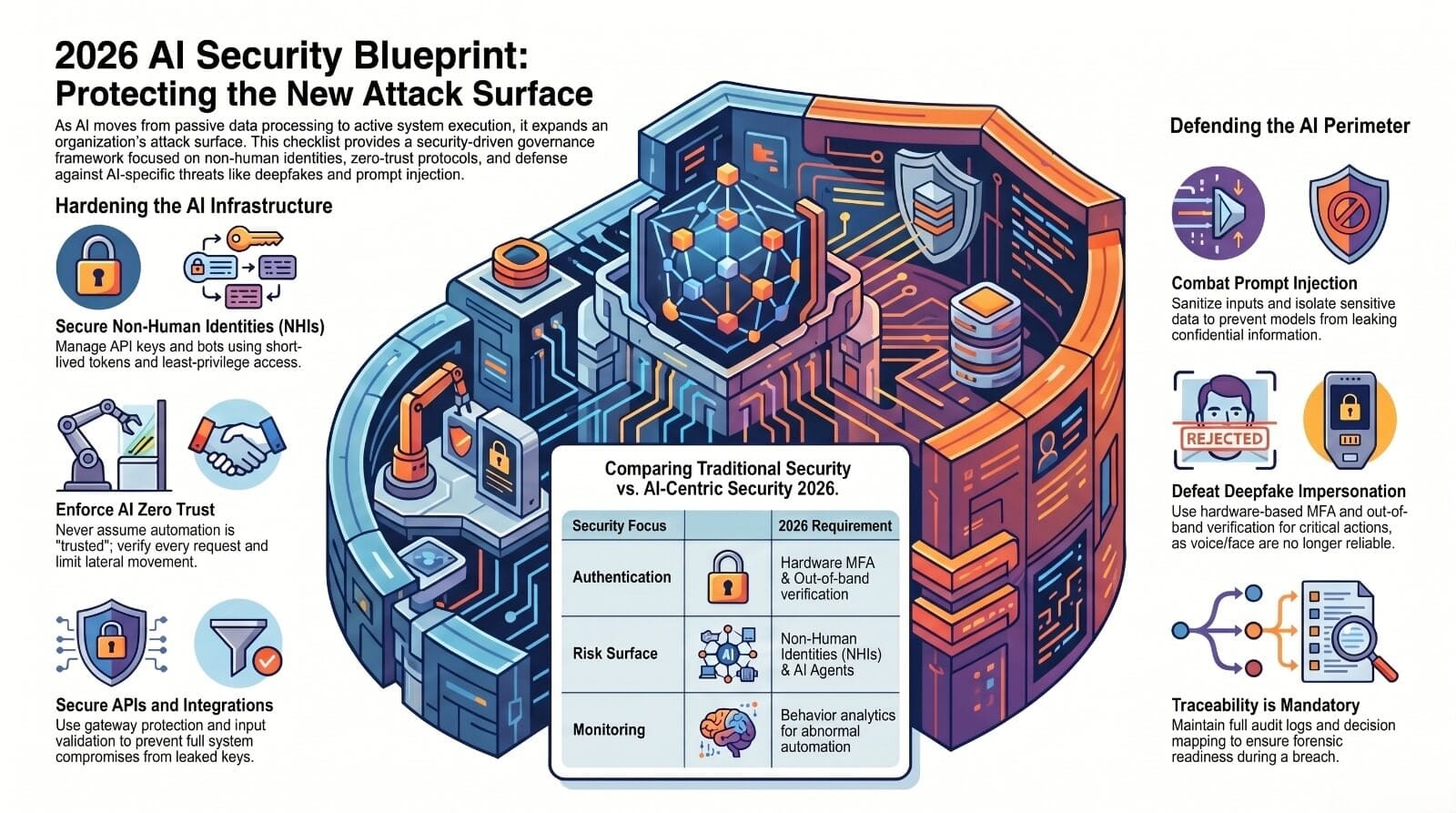

Now machines decide without waiting. They handle private details across huge systems. Because of that power, hackers aim there first. Old safety rules cannot keep up anymore.

Start anywhere – AI belongs in security planning like power grids do. Slot it into risk checks, list it with key assets, tie protection to early blueprints instead. Skip that base layer? Weak spots spread through everything after. Foundations matter most when they’re already broken.

The Foundation of the 2026 Plan

Hidden identities like apps and scripts often hold strong permissions. To protect these, strict entry rules help. Change passwords all the time instead of rarely. Watching them nonstop spots odd actions early. Machines acting alone need tight oversight.

Whatever handles tasks automatically still needs strict checks. Just because a system runs by itself doesn’t make it harmless. Risk hides where trust goes unchecked – even in code that acts alone.

Stopping fresh threats means checking data before it enters the system. Because weak inputs open doors, every prompt must pass verification steps first. When third-party tools connect, they need strict access checks – no exceptions. Fast replies can leak info, so timing must be managed carefully. Security fails if one piece gets ignored, yet each layer works better when combined silently behind scenes.

Start with clear paths through interfaces so every choice leaves a mark. Watch how users move because seeing each step matters just as much as the destination. Build guardrails that show who did what, when it happened. Let people stay involved by making actions visible, not hidden behind layers. Shape designs so they answer questions before anyone has to ask.

The Future of AI Governance Through Automated Control.

Right now, those who move first shape what safety looks like ahead. Those lagging behind react only when problems hit – blind until it’s too late.